
Byeongchan Kim is a Ph.D. candidate in the Graduate School of Data Science at Seoul National University, under the supervision of Prof. Min-hwan Oh. He received his M.S. from the same school, also under Prof. Min-hwan Oh, and a B.A. in Mathematics and Statistics from Sungkyunkwan University. His research focuses on offline reinforcement learning (RL) algorithms (e.g., model-free, model-based, goal-conditioned, and diffusion-based methods), kernel methods, optimization, and their applications.


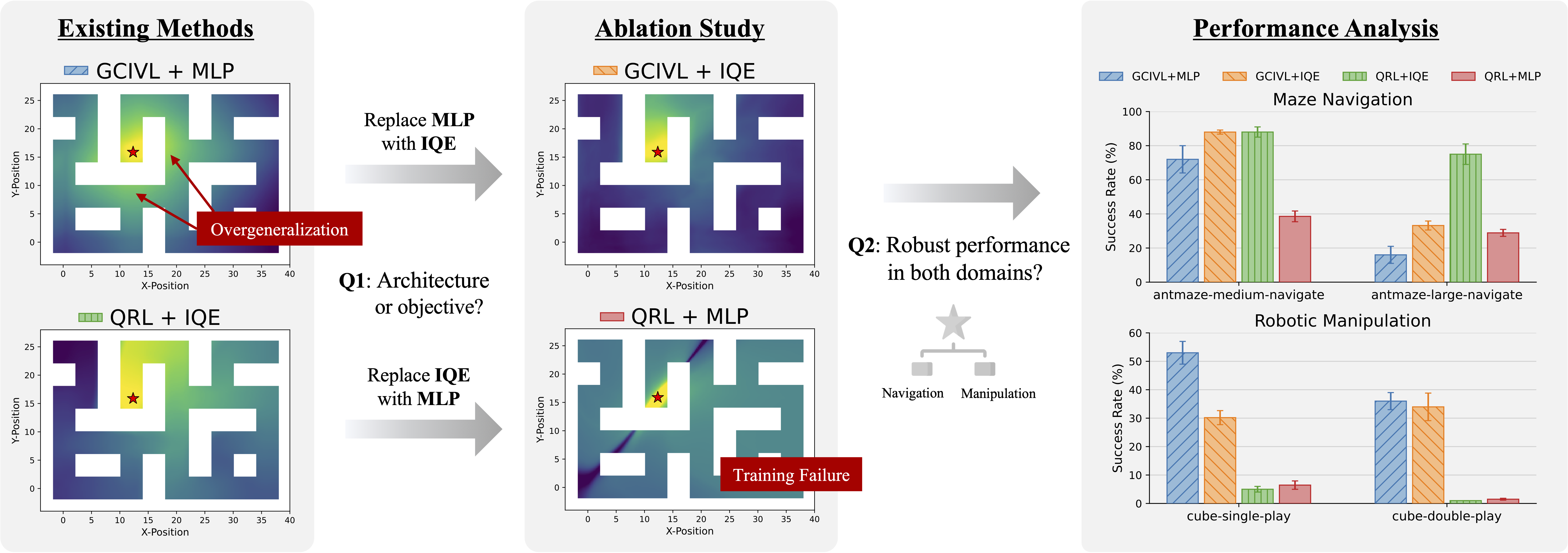

Hyungkyu Kang*, Byeongchan Kim*, Min-hwan Oh (*Equal contribution) ICML, 2026 paper / code


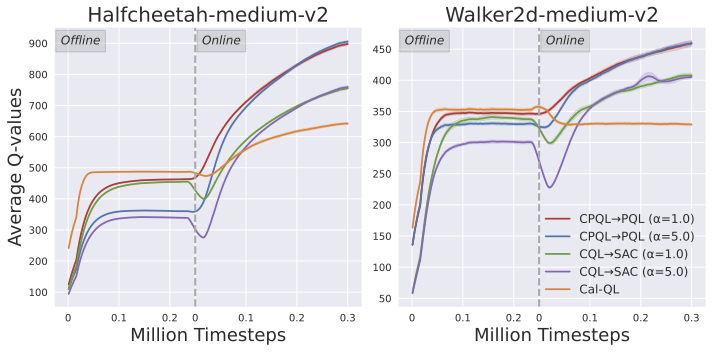

Byeongchan Kim, Min-hwan Oh ICLR, 2026 paper / code


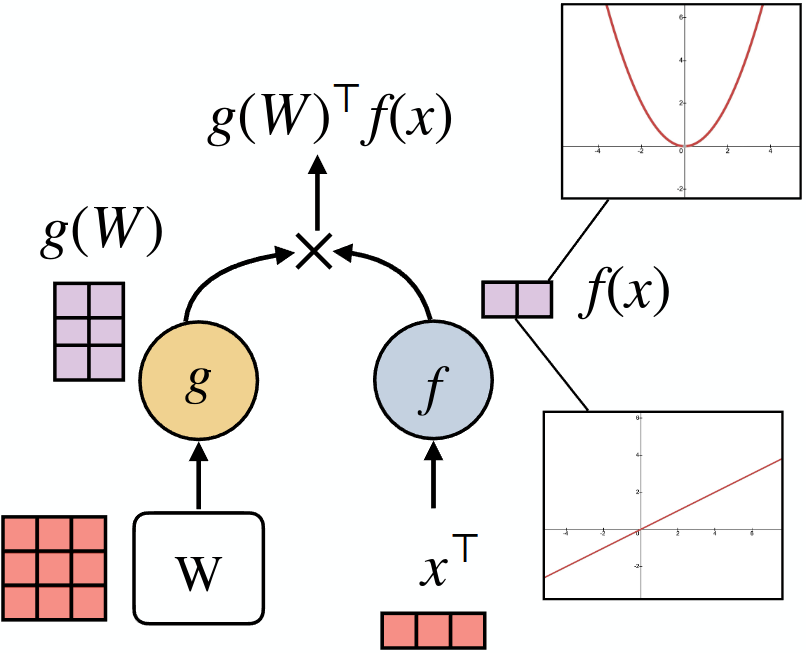

Sang Min Kim*, Byeongchan Kim*, Arijit Sehanobish*, Somnath Basu Roy Chowdhury*, Rahul Kidambi*, Dongseok Shim, Avinava Dubey*, Snigdha Chaturvedi, Min-hwan Oh, Krzysztof Choromanski* (*Equal contribution) NeurIPS, 2025 paper / code


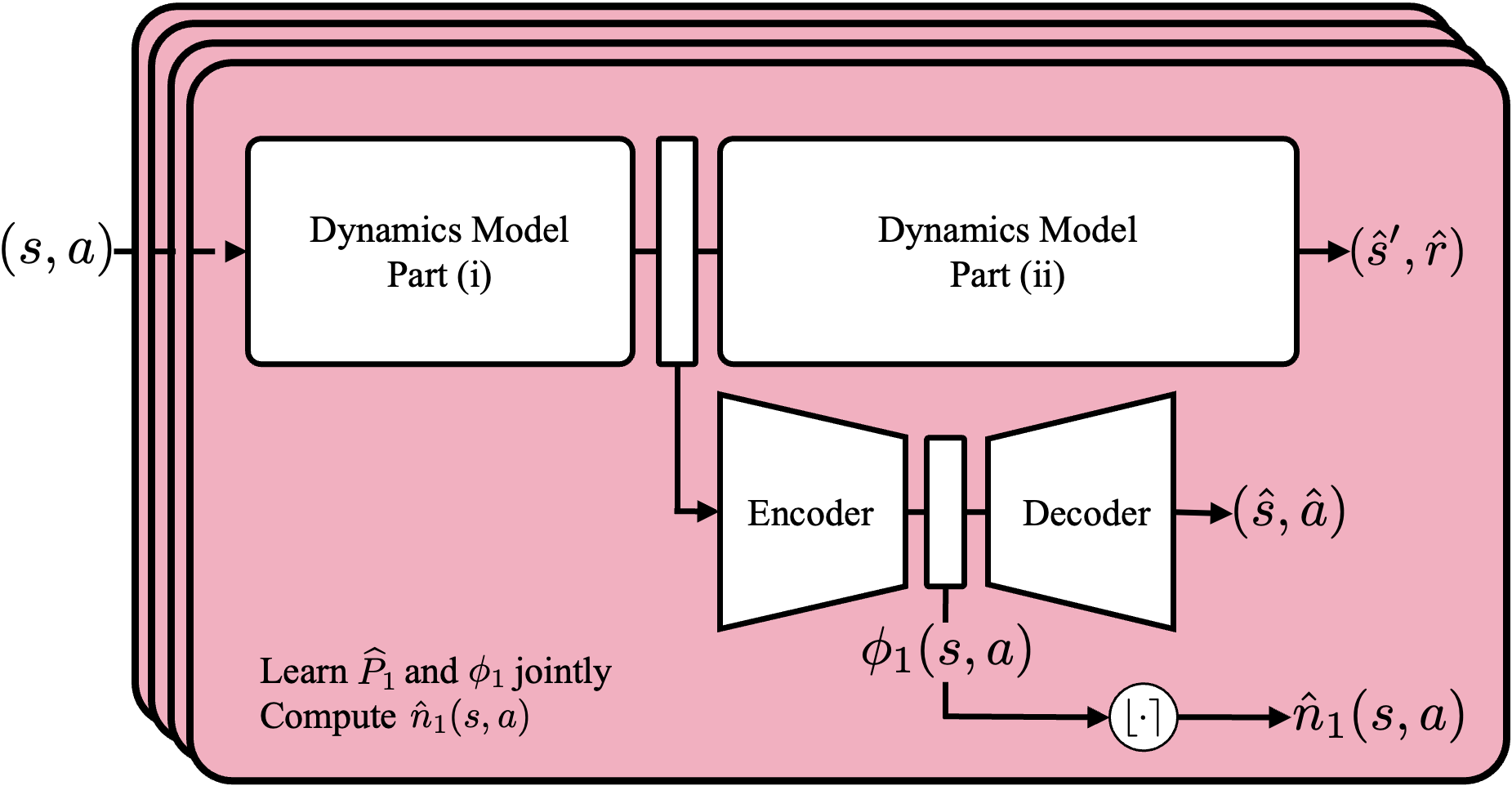

Byeongchan Kim, Min-hwan Oh ICML, 2023 (M.S. Thesis) paper / code


This template is a modification of Jon Barron's website. Find the source code for my version here. Feel free to clone it for your own use while attributing the original author, Jon Barron.